Broadcom 1QFY26 Earnings: From Discipline Discount to Prime Contractor

How the refusal to play the AI hype game became the ultimate moat

TL;DR

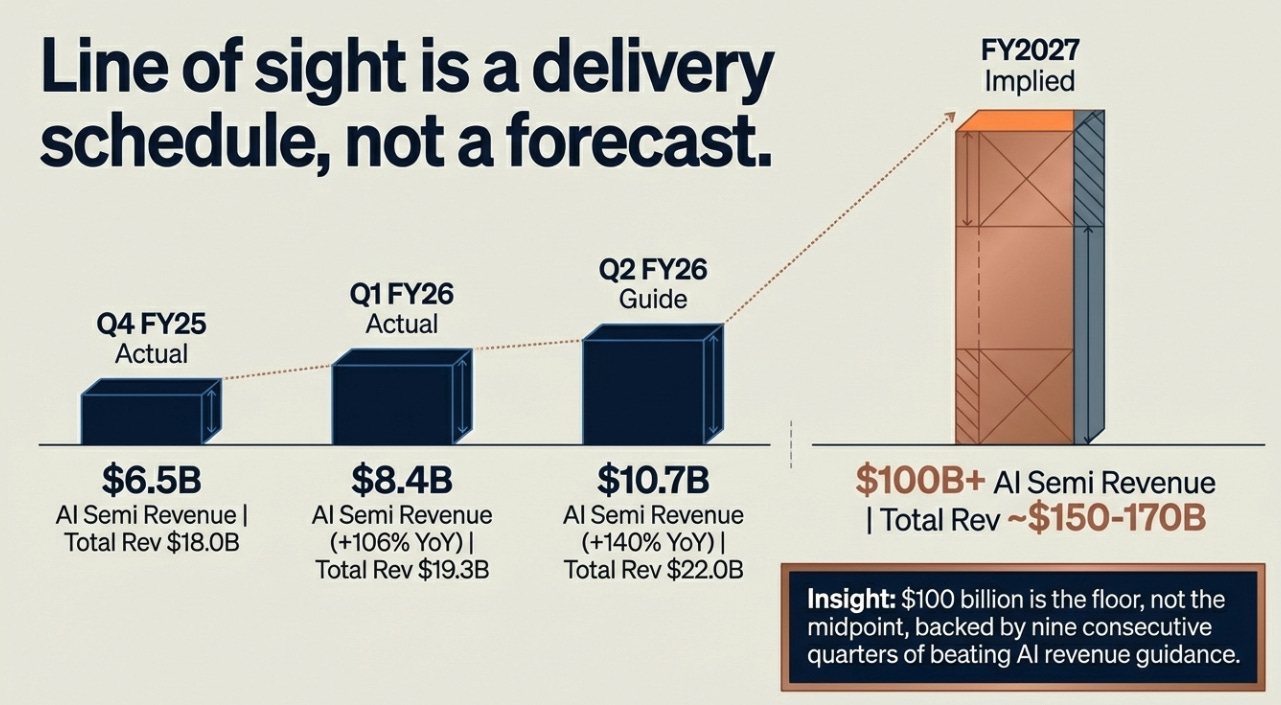

Broadcom’s $100B AI revenue “line of sight” for 2027 isn’t guidance; it’s a logistics statement backed by supply chain commitments and binding customer deployments.

Hyperscaler “insourcing” doesn’t displace Broadcom; it deepens dependence because Broadcom industrializes custom silicon at scale.

The market still values Broadcom as a semiconductor cyclical while it is increasingly the infrastructure layer underneath hyperscaler AI.

From Bloomberg:

“Broadcom reported first-quarter revenue of $19.31 billion, beating analyst estimates, with AI chip sales doubling year-over-year to $8.4 billion. The company guided for second-quarter revenue of approximately $22 billion, nearly $1.5 billion above Wall Street expectations, and disclosed that it has line of sight to more than $100 billion in AI chip revenue in fiscal 2027.”

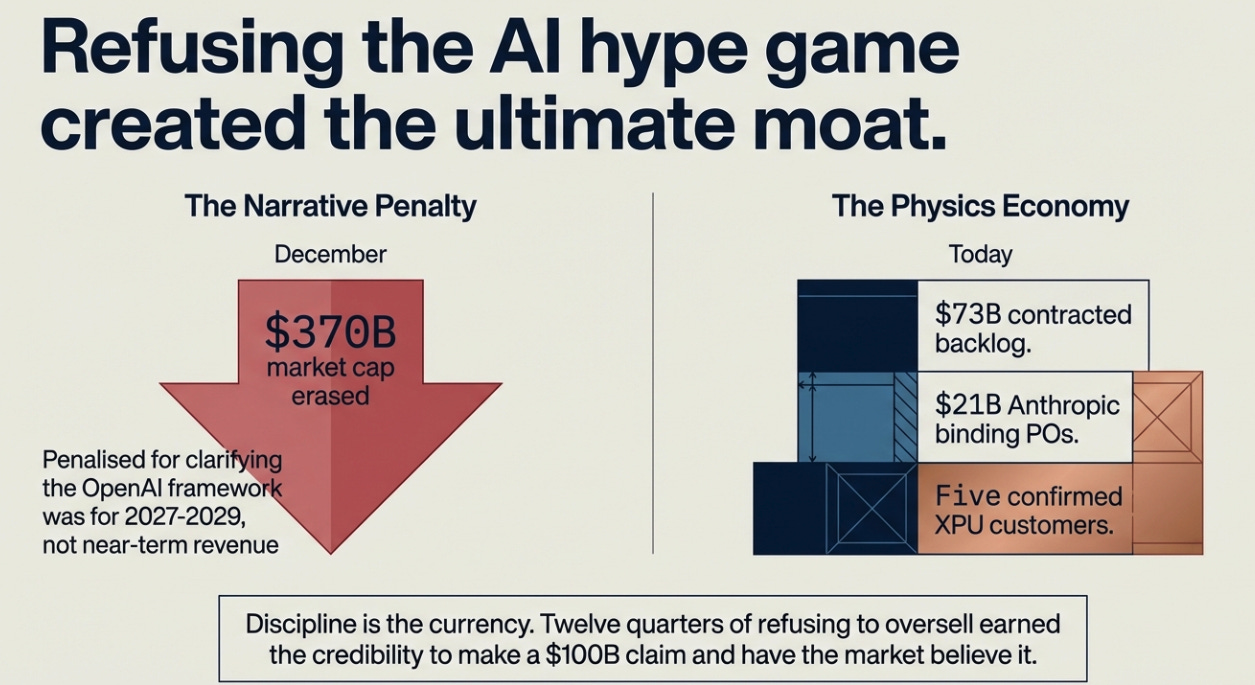

In December, Broadcom reported a strong quarter, guided conservatively, and watched $370 billion of market cap disappear. The reason was a single clarification: the OpenAI deal was a framework for 2027-2029, not near-term contracted revenue. Hock Tan had separated what was real from what was hoped for, and the market, drunk on AI narrative, treated honesty as a miss.

We wrote at the time that the selloff was a category error. Broadcom lived in the physics economy: $73 billion in contracted backlog, $21 billion in Anthropic binding purchase orders, five confirmed XPU customers with delivery schedules. The stock wasn’t pricing any of that accurately because the market was busy pricing a different company, one that played the narrative game, and Broadcom kept refusing to be that company.

What we got right: the discipline discount was real and it was an opportunity. What we underestimated: discipline, once established as the operating mode, doesn’t just correct a mispricing. It creates a new kind of leverage. Tonight Hock Tan used twelve quarters of credibility to say something that would have been dismissed as promotional coming from anyone else: Broadcom has line of sight to more than $100 billion in AI chip revenue in a single fiscal year. In prepared remarks. Reviewed by legal. Backed by a supply chain already booked through 2028.

The market believed him. That is the whole story.

What “Line of Sight” Actually Means

The phrase is doing a lot of work. “Line of sight” is not a forecast. It is not guidance. It is Broadcom’s way of saying: we can see the delivery schedule from here, and the delivery schedule adds up to $100 billion.

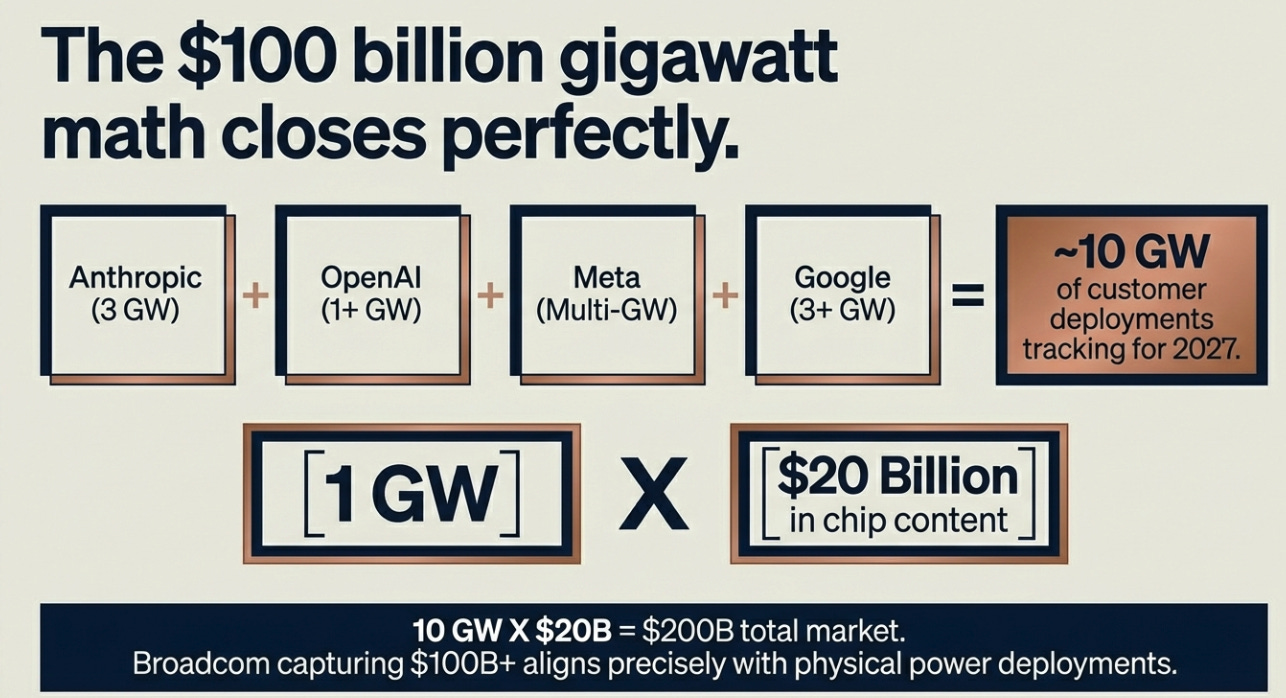

The mechanics are worth understanding because they explain why this number is more grounded than it sounds. When Bernstein’s Stacy Rasgon walked through the gigawatt math on the call (Anthropic 3 GW in 2027, OpenAI 1+ GW, Meta multiple GW, Google 3+ GW), Tan confirmed the aggregate is tracking toward 10 GW of customer deployments. Each gigawatt of compute represents roughly $20 billion in chip content, so 10 GW implies on the order of $200 billion of content across those deployments. The arithmetic closes with room to spare, which is why the $100 billion is the floor, not the midpoint. Tan’s language was explicit: “we have also secured the supply chain required to achieve this.”

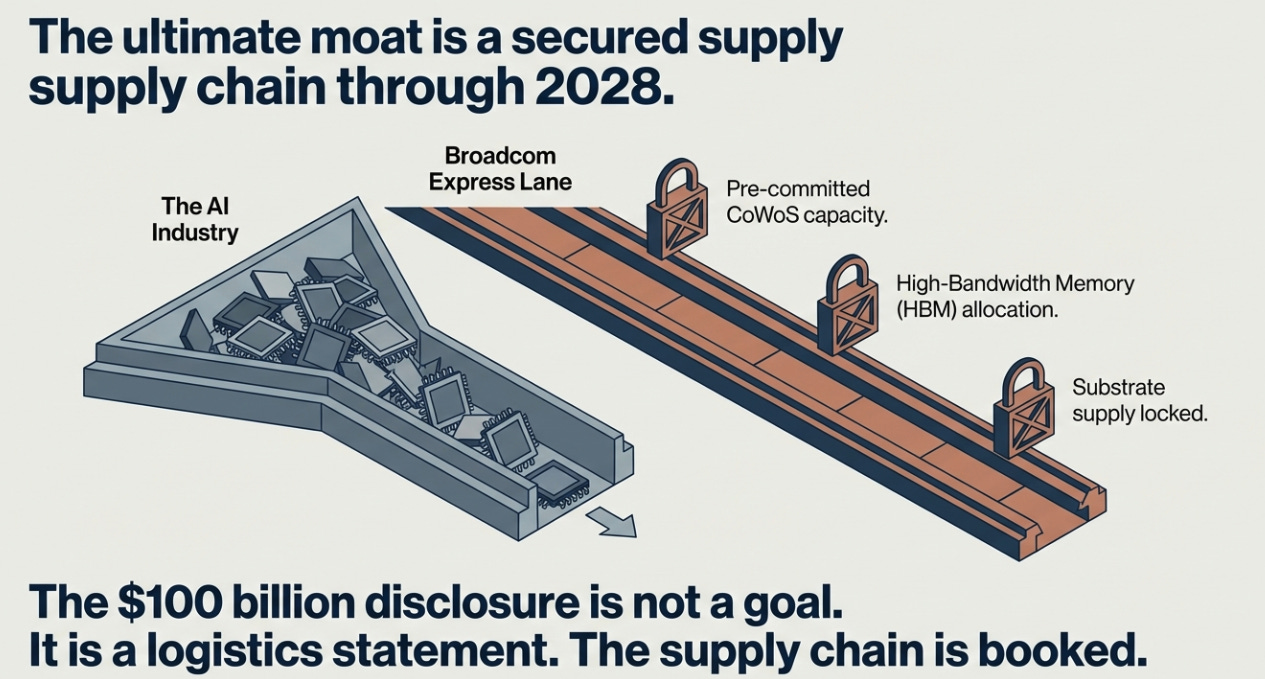

That last sentence is the one nobody followed up on, and it is arguably more important than the number itself. Broadcom has pre-committed CoWoS capacity, High-Bandwidth Memory allocation, and substrate supply through 2028. In a world where every AI infrastructure company is competing for the same constrained manufacturing inputs, Broadcom has already reserved the majority of the relevant capacity for three years. The $100 billion disclosure is not a goal. It is a logistics statement. The supply chain is booked. The customers have committed. The question is execution, and Broadcom has beaten its AI revenue guide for nine consecutive quarters.

This credibility exists, and this is the part the market still underweights, precisely because of December. The CEO who told investors the OpenAI deal wasn’t near-term revenue, at personal cost, is the same CEO now saying $100 billion in 2027 is visible. That’s not a coincidence. Discipline is the currency. Tonight Tan spent some of it, and the market’s willingness to take the number seriously is the return on twelve quarters of refusing to oversell.
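The gigawatt arithmetic from the call can be sketched in a few lines. This is a rough illustration under stated assumptions, not Broadcom’s model: the per-customer figures are the lower bounds quoted above, `META_GW_ASSUMED` is a purely hypothetical placeholder for Meta’s unquantified “multiple GW,” and the calculation ignores timing (how much of each deployment actually lands in fiscal 2027).

```python
# Rough sketch of the gigawatt arithmetic. Customer figures are the lower
# bounds quoted in the text; Meta's "multiple GW" has no number attached,
# so the 2 GW used here is an ASSUMPTION for illustration only.
CONTENT_PER_GW_BN = 20  # ~$20B of chip content per gigawatt (from the text)
META_GW_ASSUMED = 2     # hypothetical placeholder for "multiple GW"

deployments_gw = {
    "Anthropic": 3,
    "OpenAI": 1,
    "Meta": META_GW_ASSUMED,
    "Google": 3,
}


def implied_content_bn(gw: float, per_gw_bn: float = CONTENT_PER_GW_BN) -> float:
    """Chip content in $B implied by a given number of gigawatts."""
    return gw * per_gw_bn


listed_gw = sum(deployments_gw.values())
gw_needed_for_100bn = 100 / CONTENT_PER_GW_BN

print(f"Listed lower bounds: {listed_gw} GW -> ${implied_content_bn(listed_gw):.0f}B")
print(f"Aggregate of 10 GW   -> ${implied_content_bn(10):.0f}B")
print(f"GW needed for $100B  -> {gw_needed_for_100bn:.0f} GW")
```

On these inputs, $100 billion corresponds to about 5 GW at $20 billion per gigawatt, roughly half of the 10 GW aggregate Tan confirmed. That is one way to read “floor, not midpoint,” though the real answer depends on deployment timing that the sketch does not model.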

Why Insourcing Makes Broadcom More Valuable, Not Less

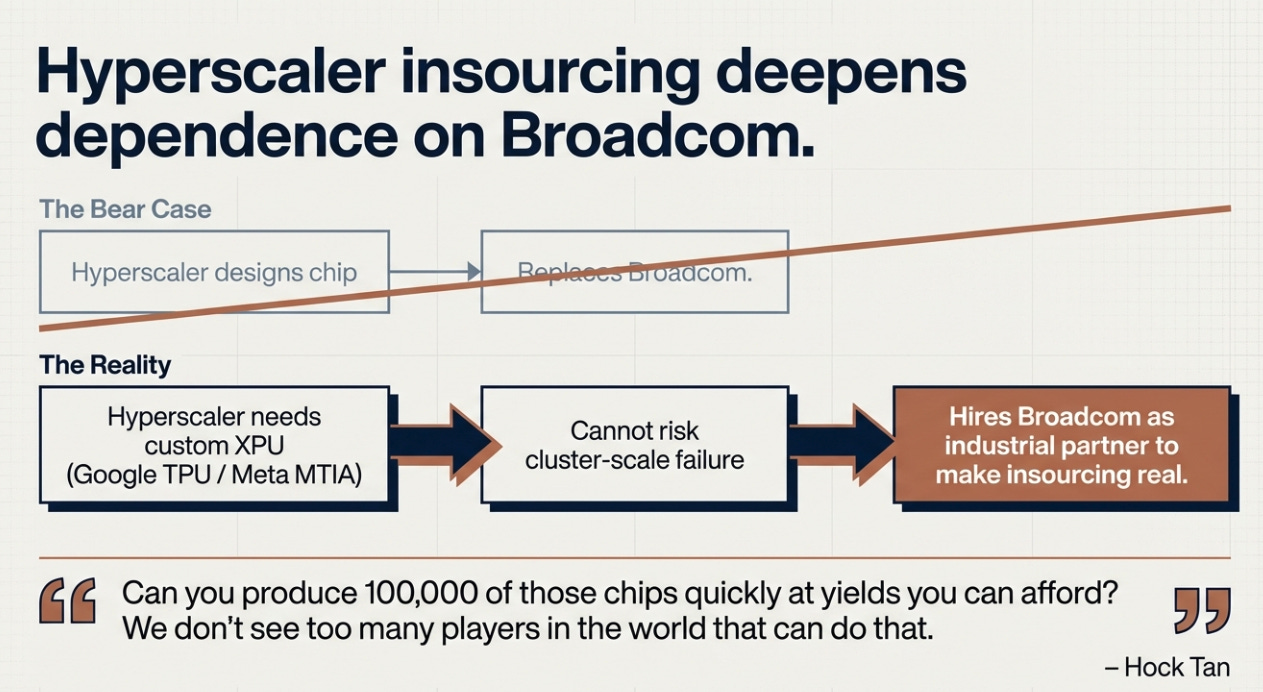

The bear case that has followed Broadcom for three years goes like this: Google has TPUs. Meta has MTIA. Amazon has Trainium. OpenAI is building its own silicon. Eventually these internal programs mature, volume moves in-house, and Broadcom’s economics compress. The customer is the competition.

I think this has the causality exactly backwards, and the reason it’s backwards explains why Broadcom’s moat is structurally different from what most people think it is.

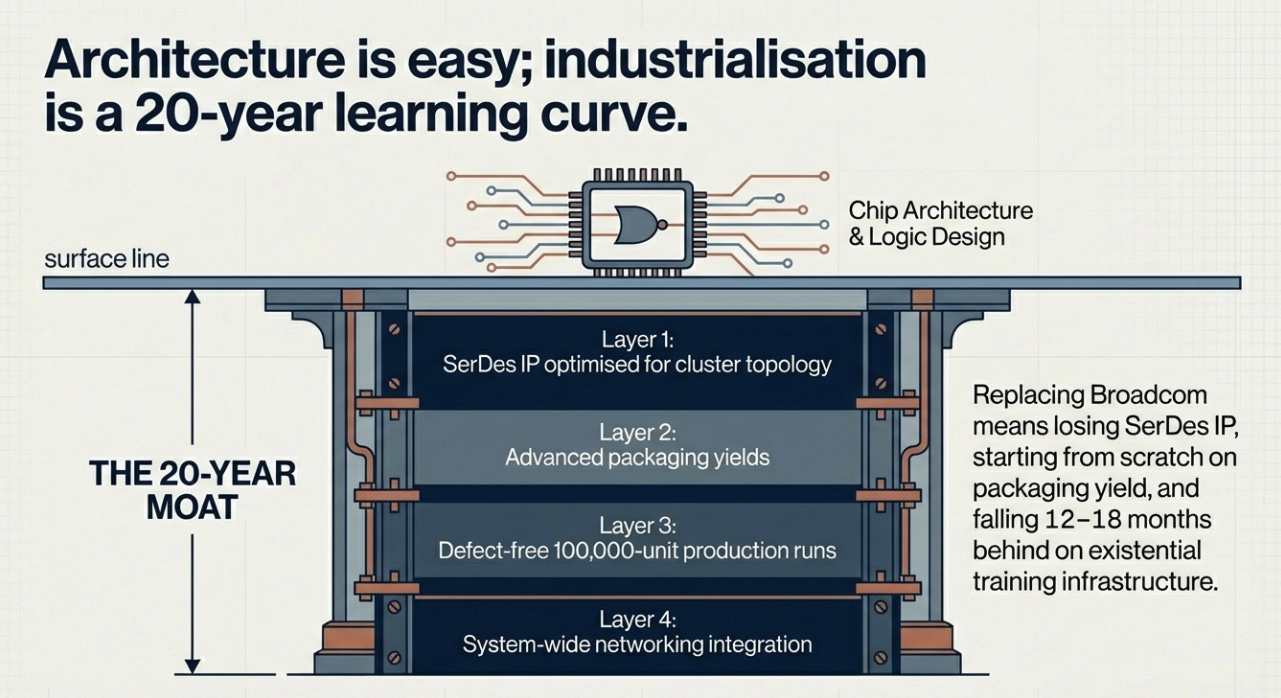

The mistake is assuming the hard part of custom silicon is the architecture. It isn’t. Google has run TPUs in its own data centers since before it unveiled them in 2016. They are genuinely good chips. But Google still uses Broadcom, not because they can’t design the silicon, but because the hard part is everything that comes after the architecture: SerDes IP optimized for their specific cluster topology, advanced packaging at yields that make the economics work, 100,000-unit production runs without defect cascades, networking integration across the entire compute cluster, and the ability to do all of this on a roadmap that doesn’t slip. Tan described it plainly when asked about customer-owned tooling competition: “Can you produce 100,000 of those chips quickly at yields you can afford? We don’t see too many players in the world that can do that.”

This is a 20-year learning curve. It cannot be acquired by hiring engineers or replicated by running a program for three years. And here is the structural implication: the more strategically important custom silicon becomes to hyperscalers, and the more their competitive position in AI depends on their XPU program working, the less they can afford to experiment with alternatives to Broadcom. Failure at cluster scale is not a bad quarter. It is an existential setback. When Google’s Ironwood TPU program is co-designed with Broadcom, replacing Broadcom doesn’t mean finding a better chip vendor. It means rebuilding the entire development relationship, losing the SerDes IP, starting from scratch on packaging yield, and falling 12-18 months behind on the training infrastructure the business depends on.

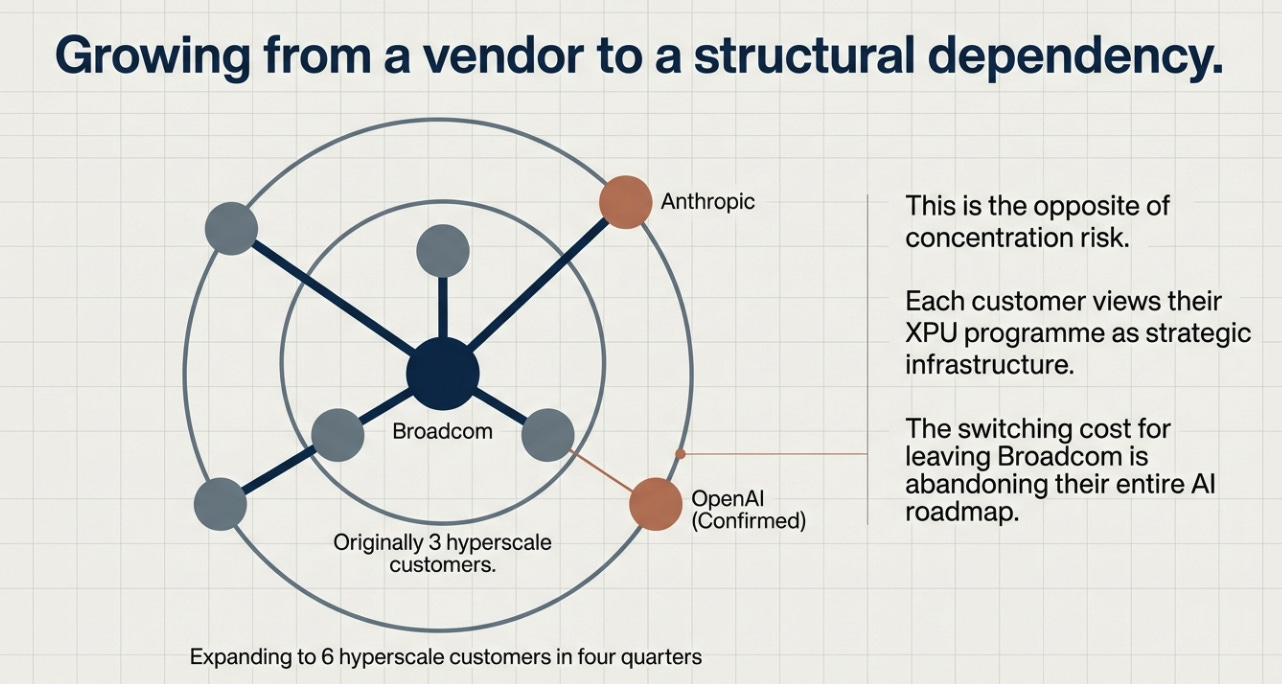

This is why the customer list expanding from three to six in four quarters is the opposite of a concentration risk. Each new customer (Anthropic, then the implied fifth, now OpenAI confirmed tonight) adds revenue while deepening the organizational muscle required to run parallel deep-engagement programs simultaneously. Broadcom now has six hyperscale customers who each view their XPU program as strategic infrastructure rather than a vendor relationship. The switching cost is the entire AI roadmap. That is not a chip vendor. That is a structural dependency.

The sixth customer confirmation also directly addresses the most specific bear argument from last quarter. Meta’s MTIA program, cited in several analyst notes as evidence of hyperscaler insourcing displacing Broadcom, was defended explicitly tonight: “alive and well, we’re shipping now.” The insourcing thesis didn’t displace Broadcom. It hired Broadcom as the industrial partner to make insourcing real.

The Asset Nobody Is Pricing

There is a piece of tonight’s print that received almost no analyst attention, and it may be the most important element for anyone thinking about where the stock goes over three years.

AI networking (Tomahawk switching, SerDes, DSPs) represented 33% of Q1’s $8.4 billion AI revenue, approximately $2.8 billion. In Q2, it is trending to 40% of $10.7 billion: roughly $4.3 billion in a single quarter. Annualized from Q2, that is $17 billion of AI networking revenue, growing faster than the XPU business and anchored by Tomahawk 6, a 100-terabit-per-second switch that has no competitive equivalent in the market today.
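The networking figures above follow from simple share-of-revenue arithmetic. The sketch below uses only numbers quoted in the text; the one assumption is the annualization method, which simply repeats Q2 for four quarters rather than modeling any growth.

```python
# Networking share of AI revenue, using only figures quoted in the text.
def networking_revenue_bn(ai_revenue_bn: float, networking_share: float) -> float:
    """AI networking revenue in $B given total AI revenue and networking mix."""
    return ai_revenue_bn * networking_share


q1 = networking_revenue_bn(8.4, 0.33)   # Q1: 33% of $8.4B
q2 = networking_revenue_bn(10.7, 0.40)  # Q2: trending to 40% of $10.7B

# Simple run-rate: ASSUMES the Q2 level repeats for four quarters.
annualized = q2 * 4
qoq_growth = q2 / q1 - 1

print(f"Q1 networking:       ${q1:.1f}B")
print(f"Q2 networking:       ${q2:.1f}B")
print(f"Annualized from Q2:  ${annualized:.0f}B")
print(f"Q1 -> Q2 growth:     {qoq_growth:.0%}")
```

This reproduces the roughly $2.8 billion, $4.3 billion, and $17 billion figures, and it shows that the implied sequential growth in networking is about 54%, well ahead of the growth in total AI revenue over the same quarter.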

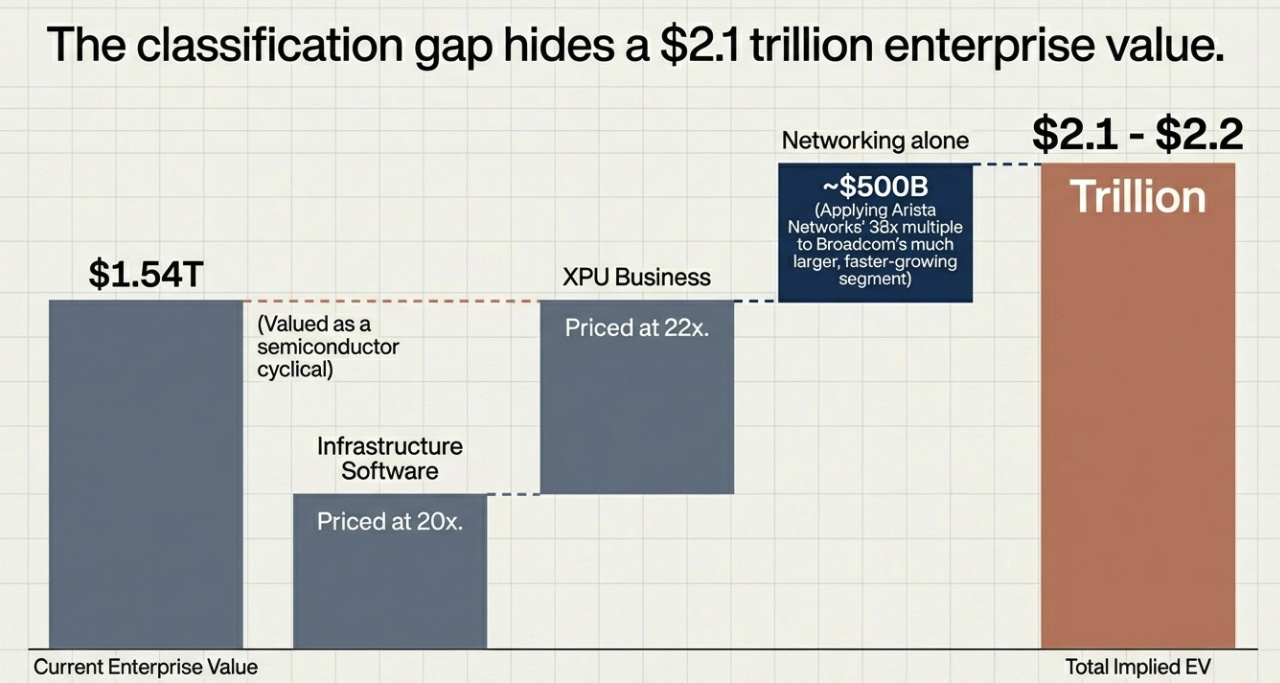

Arista Networks is the closest comparable. Arista trades at approximately 38 times forward earnings on $8 billion of annual revenue growing at 25%. Broadcom’s AI networking business is more than twice as large, growing faster, and receives zero standalone valuation credit because it sits inside the semiconductor segment disclosure. If you apply Arista’s multiple to Broadcom’s AI networking business, networking alone is worth roughly $500 billion. Add the XPU business at 22 times, infrastructure software at 20 times, and the sum-of-parts reaches $2.1-2.2 trillion against a current enterprise value of $1.54 trillion. The gap is not a precision argument. It is a classification argument. Broadcom is being valued as a semiconductor cyclical when it is simultaneously a networking monopolist growing faster than any pure-play networking company, an XPU franchise with $100 billion in confirmed 2027 visibility, and a software annuity generating 78% operating margins.
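A quick sanity check on the sum-of-parts claims, using only figures from the paragraph above. Because a forward P/E applies to earnings rather than revenue, the sketch backs out the earnings that a $500 billion networking valuation implies at Arista’s multiple. The implied margin is computed against the $17 billion Q2 run-rate, which is an assumption about the base; if the multiple was applied to a later-year estimate, the implied margin would be lower.

```python
# Back-of-envelope check on the sum-of-parts claims (all inputs from the text).
ARISTA_FORWARD_PE = 38         # Arista's approximate forward earnings multiple
NETWORKING_VALUE_BN = 500      # value the text assigns to AI networking
NETWORKING_RUN_RATE_BN = 17    # annualized Q2 AI networking revenue
SOTP_RANGE_BN = (2100, 2200)   # sum-of-parts range, $2.1T to $2.2T
ENTERPRISE_VALUE_BN = 1540     # current enterprise value, $1.54T

# Earnings implied by $500B at 38x (implied by the math, not disclosed).
implied_earnings_bn = NETWORKING_VALUE_BN / ARISTA_FORWARD_PE

# Margin implied if those earnings come from the $17B run-rate (ASSUMPTION).
implied_margin = implied_earnings_bn / NETWORKING_RUN_RATE_BN

# Upside from current enterprise value to each end of the SOTP range.
upside_low, upside_high = (v / ENTERPRISE_VALUE_BN - 1 for v in SOTP_RANGE_BN)

print(f"Implied networking earnings: ${implied_earnings_bn:.1f}B")
print(f"Implied margin on run-rate:  {implied_margin:.0%}")
print(f"SOTP upside vs. current EV:  {upside_low:.0%} to {upside_high:.0%}")
```

The output shows what the $500 billion figure quietly assumes: about $13 billion of networking earnings, or a margin in the high 70s on today’s run-rate. It also sizes the classification gap at roughly 36% to 43% above current enterprise value.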

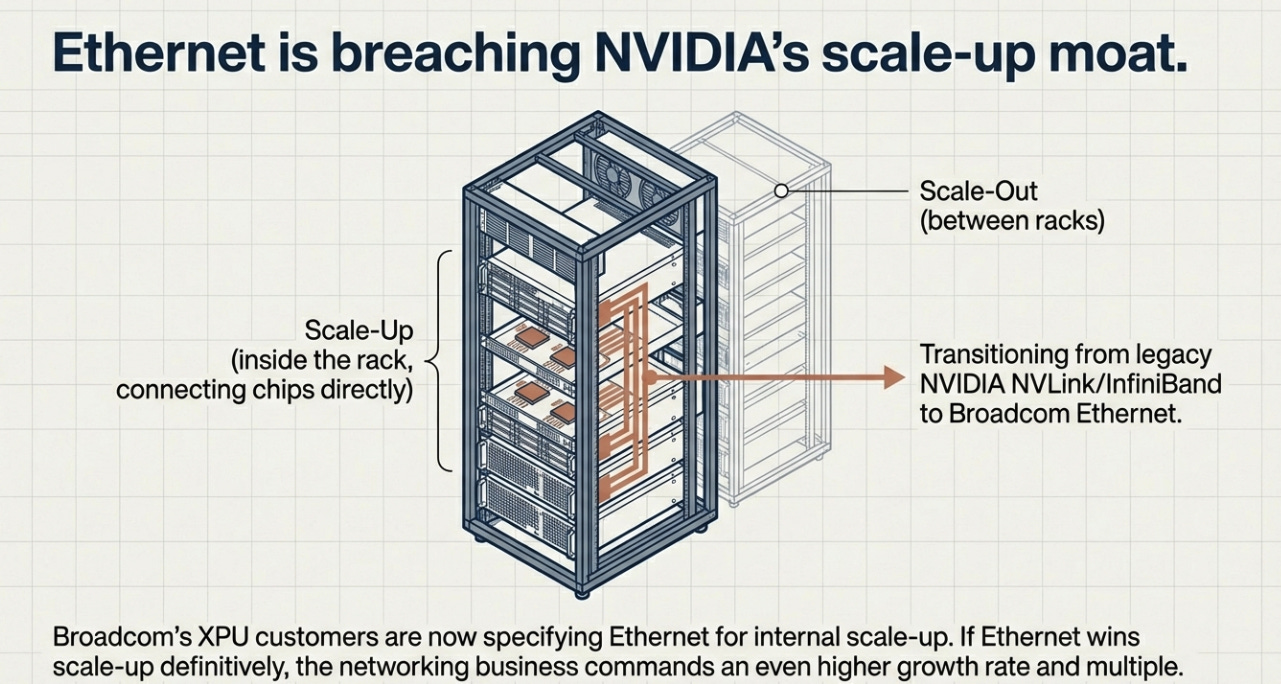

Charlie Kawwas disclosed something in Q&A that made this gap wider still. Scale-up networking (connecting chips within a rack), previously dominated by NVIDIA’s NVLink, is now being specified as Ethernet by Broadcom’s XPU customers. “A lot of the XPU designs we’re doing, we’re being asked to scale up through Ethernet.” This extends Broadcom’s networking moat into the one architectural layer where NVIDIA had been structurally protected. If Ethernet wins scale-up as definitively as it has won scale-out, the networking business grows faster and commands a higher multiple than current estimates support.

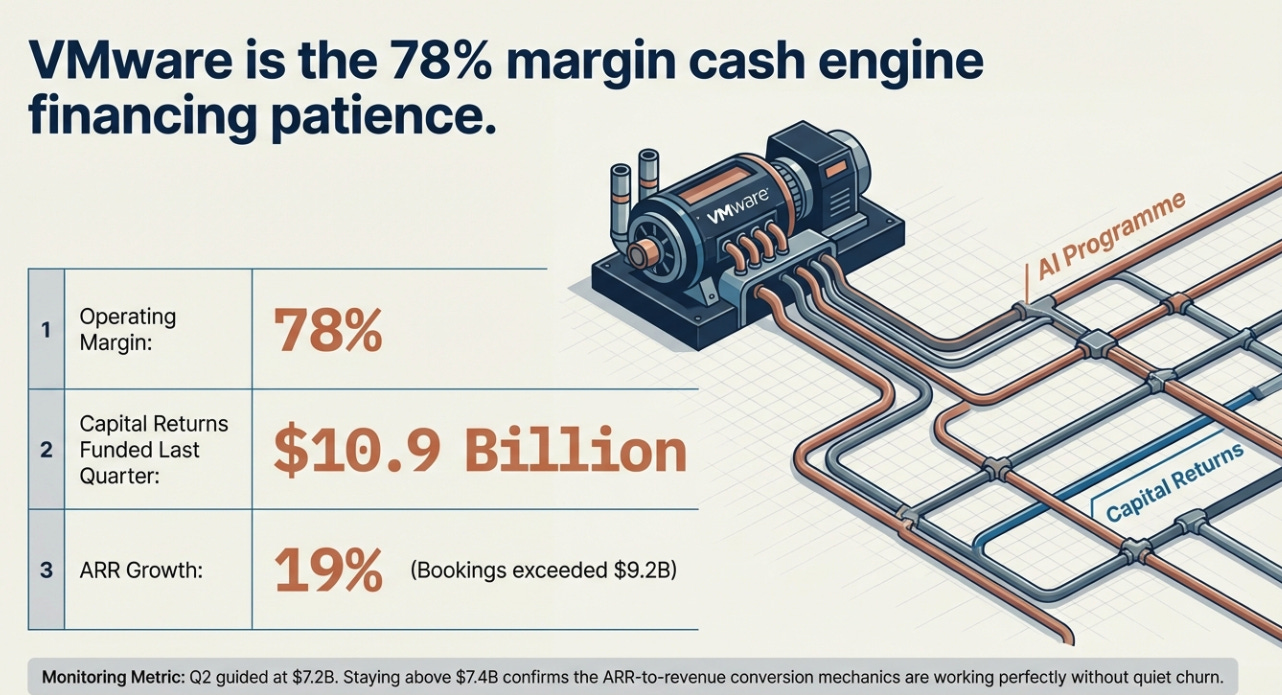

The VMware piece is simpler. Software grew 1% year-over-year in Q1, the one number that wasn’t clean, but ARR grew 19% and bookings exceeded $9.2 billion, consistent with subscription conversion mechanics that defer reported revenue. Q2 is guided at $7.2 billion, implying 9% growth. The monitoring item is straightforward: a print above $7.4 billion confirms the ARR-to-revenue conversion is working; two consecutive misses require genuine re-examination of whether VMware pricing pressure is generating quiet churn rather than the delayed recognition management describes. VMware is not the thesis. It is the 78%-margin cash engine that funded $10.9 billion in capital returns last quarter and allows Broadcom to run the AI program without treating the balance sheet as a constraint. It finances patience.
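The software thresholds can be put on a common footing with a short calculation. The sketch below backs out the prior-year quarter implied by the $7.2 billion guide and 9% growth, then asks what growth rate the $7.4 billion confirmation level would represent. Both derived numbers are implied by the text’s figures, not reported values.

```python
# Software (VMware) growth math, using figures quoted in the text.
Q2_GUIDE_BN = 7.2            # Q2 software revenue guide
IMPLIED_GROWTH = 0.09        # year-over-year growth implied by the guide
CONFIRM_THRESHOLD_BN = 7.4   # level the text treats as confirmation

# Prior-year Q2 software revenue implied by the guide and growth rate.
prior_year_q2_bn = Q2_GUIDE_BN / (1 + IMPLIED_GROWTH)

# Year-over-year growth that a $7.4B print would represent.
growth_at_threshold = CONFIRM_THRESHOLD_BN / prior_year_q2_bn - 1

print(f"Implied prior-year Q2: ${prior_year_q2_bn:.1f}B")
print(f"Growth at $7.4B:       {growth_at_threshold:.0%}")
```

In other words, the confirmation level is not just a small beat: it corresponds to roughly 12% growth against an implied base of about $6.6 billion, three points above what the guide assumes.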

Three Futures

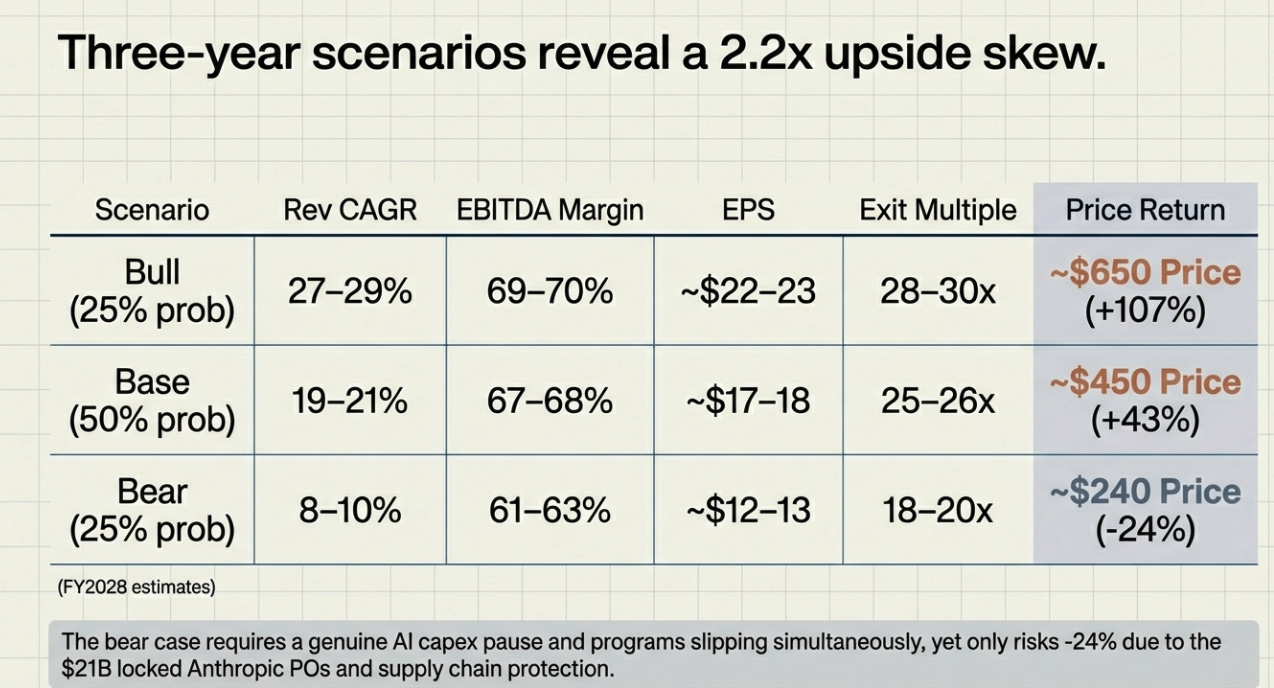

The honest version of a three-year scenario analysis acknowledges that the range is wide and the assumptions are explicit.

The bull case requires the $100 billion 2027 disclosure to deliver as described, not perfectly, but within range, and for the market to re-rate Broadcom toward infrastructure platform multiples rather than semiconductor cyclical multiples. In this scenario OpenAI ramps to 3+ GW in 2028, a seventh customer is added, and networking mix holds above 40%.

The base case is what happens if the $100 billion is real but lumpy, VMware grows modestly, gross margins hold at 77%, and the market remains in “show me” mode through FY2027 before partially re-rating on delivery. This is the scenario where Broadcom keeps executing and the stock closes roughly half the valuation gap implied by the SOTP analysis.

The bear case requires a genuine AI capex pause, not a sentiment wobble but a real pullback in hyperscaler compute spending, combined with at least one major customer program slipping a generation. This is the scenario where Broadcom’s supply chain commitments become inventory exposure and the multiple reverts toward cyclical semiconductor territory. It requires several things going wrong simultaneously against the backdrop of $21 billion in Anthropic binding purchase orders and supply chain committed through 2028. Possible. Not the base case.

The upside/downside skew at 2.2x argues for the position. The bear case floor at $240 assumes the supply chain lockup and binding POs provide no protection. The bull case ceiling at $650 assumes only modest multiple expansion relative to what the networking business alone would justify on a standalone basis.
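The skew figure can be unpacked with a little algebra. Assuming the skew is defined as dollar upside to the bull target divided by dollar downside to the bear floor (a common convention, but an assumption here since the text does not define it), the three stated numbers pin down the reference share price the analysis is working from.

```python
# Back out the reference price implied by the stated skew and price targets.
# ASSUMPTION: skew = (bull - price) / (price - bear), in dollar terms.
BULL_TARGET = 650.0
BEAR_FLOOR = 240.0
STATED_SKEW = 2.2


def skew_at(price: float, bull: float = BULL_TARGET, bear: float = BEAR_FLOOR) -> float:
    """Upside/downside ratio at a given share price."""
    return (bull - price) / (price - bear)


def implied_price(skew: float, bull: float = BULL_TARGET, bear: float = BEAR_FLOOR) -> float:
    """Solve (bull - P) / (P - bear) = skew for P."""
    return (bull + skew * bear) / (1 + skew)


reference_price = implied_price(STATED_SKEW)
upside_pct = BULL_TARGET / reference_price - 1
downside_pct = BEAR_FLOOR / reference_price - 1

print(f"Implied reference price: ${reference_price:.0f}")
print(f"Upside to bull case:     {upside_pct:+.0%}")
print(f"Downside to bear case:   {downside_pct:+.0%}")
```

Under that definition the 2.2x skew corresponds to a reference price of about $368, with roughly 77% upside to the bull case against roughly 35% downside to the bear case. Calling `skew_at` with a different price shows how quickly the ratio compresses as the stock moves toward the bull target.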

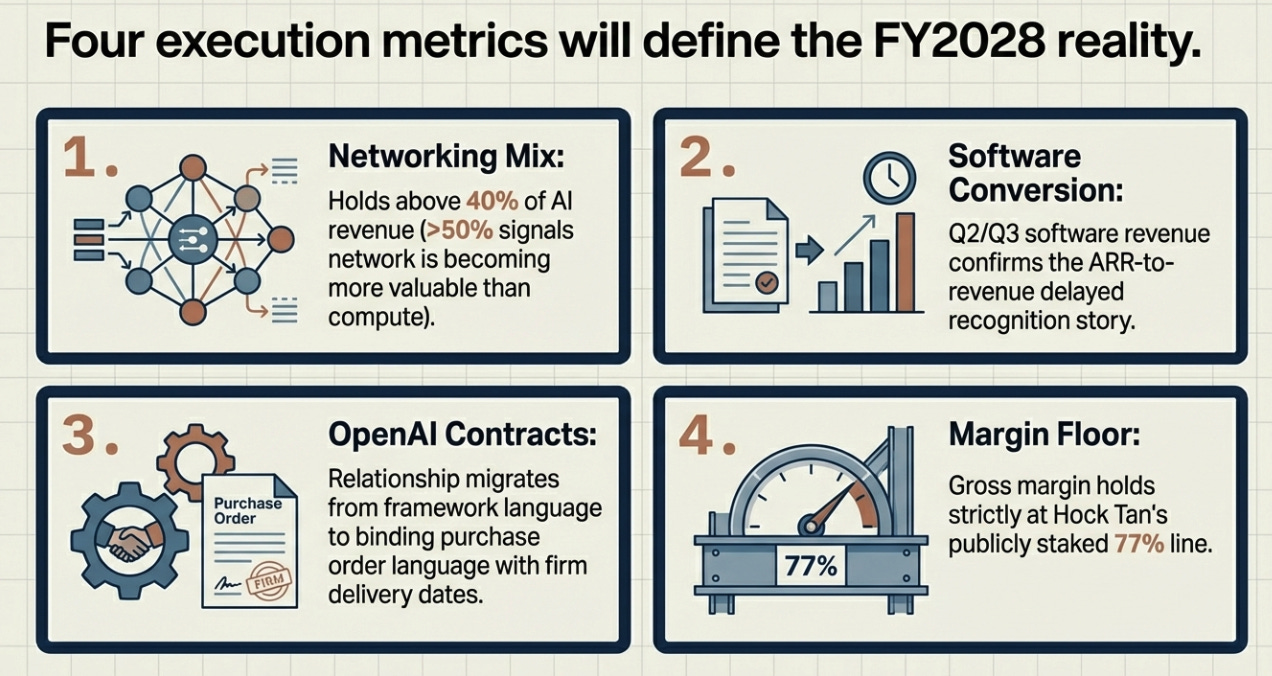

Four things will tell you which scenario is unfolding: whether AI networking mix holds above 40% of AI revenue (rising above 50% signals the network is becoming more valuable than the compute); whether Q2 and Q3 software revenue confirms the ARR-to-revenue conversion story; whether OpenAI’s relationship migrates from framework language to binding purchase order language with delivery dates; and whether gross margin holds at Hock’s explicit 77% floor, the line he has now publicly staked his credibility on.

The Asset Nobody Expected: Credibility

Our original view was that Broadcom was the disciplined adult in an AI market intoxicated by narrative, being penalized for refusing to blur the line between real orders and aspirational frameworks. That view was correct and the buying opportunity was real.

The updated view is more interesting. Discipline wasn’t just a personality trait that would eventually be rewarded by a market correction. It was the precondition for something more durable: the credibility to make a claim as large as $100 billion in a single year and have institutional investors treat it as a delivery schedule rather than a forecast. That credibility is now a competitive asset. It is why the six customers are willing to share multi-year roadmaps with Broadcom. It is why supply chain partners lock up capacity years in advance. It is why the customer-owned tooling (COT) programs that were supposed to displace Broadcom end up running through Broadcom.

The question is no longer whether Broadcom wins AI. It is whether the market understands that winning looks like becoming the industrial infrastructure that makes everyone else’s AI real, not a better chip vendor, but the prime contractor that turns ambition into physics. The $100 billion is not a promise. The supply chain is already booked.

Disclaimer:

The content does not constitute any kind of investment or financial advice. Kindly reach out to your advisor for any investment-related advice. Please refer to the tab “Legal | Disclaimer” to read the complete disclaimer.