Cadence 4Q25: Design Throughput Toll

AI Agents, and the Fight to Decouple Revenue from Headcount

TL;DR:

AI doesn’t eliminate Cadence; it increases the number of monetizable design iterations.

The upside comes from pricing throughput, not seats.

The risk isn’t failure; it’s success without pricing power.

AI was supposed to come for software. That was the dominant narrative through 2024 and into 2025, and every enterprise software company faced the same existential question: does AI make your product more valuable, or does it make your product unnecessary? For most of the past year, the market decided that Cadence Design Systems fell into the latter category. The stock underperformed its Bloomberg peers by 36%. The logic seemed sound: if AI can design chips, why do you need expensive design tools?

Then Cadence reported Q4 earnings. The numbers were fine; they always are. FY25 revenue of $5.3 billion, up 14%. Non-GAAP operating margin of 44.6%. Record backlog of $7.8 billion. Guidance above consensus. But the stock didn’t jump 9.3% because of a beat-and-raise. It jumped because of a narrative shift buried in the earnings call, one that reframes the entire investment case around a single question that has nothing to do with the semiconductor cycle.

The question is this: can Cadence decouple its revenue growth from human headcount growth?

The Constraint

To understand why this matters, you must understand the structural cap on Cadence’s historical business model.

Cadence sells engineering software: the physics engines, simulation tools, and verification platforms that chip designers use to turn ideas into manufacturable silicon. Historically, this software was licensed per engineer. More engineers at a customer meant more seats, which meant more revenue. The growth rate of Cadence’s business was therefore tethered to the growth rate of hardware engineering headcount at semiconductor companies.

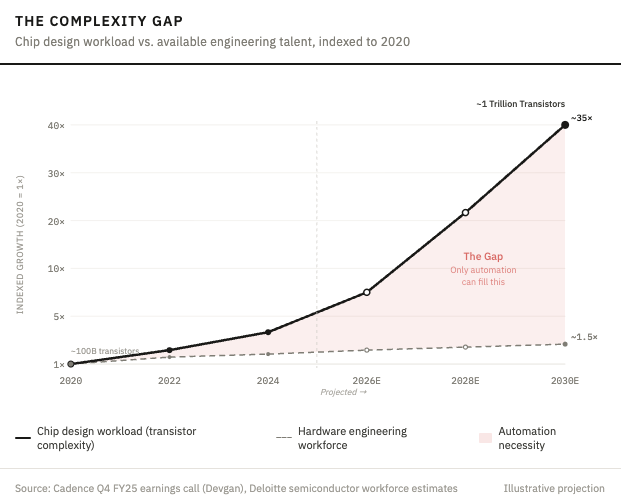

This worked until it didn’t. And it didn’t stop working because of competition, or recession, or some strategic error by management. It stopped working because physics collided with economics. CEO Anirudh Devgan stated on the call that chip complexity will grow 30 to 40 times over the next five years, reaching trillion-transistor designs by 2030. You cannot hire 30 times more engineers. The talent doesn’t exist. You cannot accept 30 times longer development cycles either, because your competitor is NVIDIA shipping new architectures annually. The only solution is automation.
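As a rough sanity check on the complexity figure, here is a minimal sketch of what 30x to 40x over five years implies per year. It assumes the growth compounds smoothly, which is an assumption for illustration and not something stated on the call; the function name is hypothetical.

```python
def implied_annual_multiple(total_multiple: float, years: int) -> float:
    """Annual growth multiple that compounds to total_multiple over `years`.

    Assumes smooth compounding (illustrative assumption only).
    """
    return total_multiple ** (1 / years)


# The 30x and 40x bounds and the five-year horizon come from the text.
low = implied_annual_multiple(30, 5)
high = implied_annual_multiple(40, 5)

print(f"30x over 5 years -> {low:.2f}x per year")   # 1.97x
print(f"40x over 5 years -> {high:.2f}x per year")  # 2.09x
```

In other words, the claim amounts to design complexity roughly doubling every year, which is the pace that engineering headcount would have to match without automation.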

This is the context for ChipStack, the “agentic AI” product Cadence launched the week before earnings. ChipStack doesn’t help an engineer work faster. It writes the RTL code, generates the test bench, and verifies the logic autonomously. The software is the worker, not the assistant. And critically, it calls Cadence’s underlying physics engines thousands of times per run. A human engineer might explore dozens of design configurations; an agent explores millions.

Here is the business model question in its starkest form: is the customer paying for access to a tool, or paying for the output of that tool? If the former, Cadence is a subscription software company. If the latter, Cadence is a toll on design throughput. Those are fundamentally different businesses with fundamentally different economics.

The Jevons Paradox

The bear case is intuitive: AI makes engineers more productive, so companies need fewer engineers, so Cadence sells fewer seats. This is the deflationary argument, and it has been wrong about every prior wave of automation for the same structural reason.

When Amazon Web Services launched, the conventional wisdom was that cheap cloud computing would reduce total IT spending. Companies would need fewer servers, fewer data centers, fewer IT staff. The opposite happened. By making compute cheap and accessible, AWS didn’t shrink the market for computing; it created entirely new categories of demand that hadn’t existed before. Companies that never would have built their own data centers suddenly spun up thousands of instances. Startups that couldn’t afford infrastructure suddenly competed with incumbents. Total spending on computing exploded, and AWS captured the toll.

The same dynamic is unfolding in chip design. Five years ago, the only companies designing custom silicon were traditional semiconductor firms such as Intel, AMD, Qualcomm, and Broadcom. Today, Apple, Amazon, Google, Microsoft, Meta, and Tesla all design their own chips. Cadence noted on the call that a “marquee hyperscaler” adopted the full Cadence digital flow for its first customer-owned-tooling AI chip tape-out. System companies are becoming chip companies. By making chip design more accessible through automation, Cadence isn’t shrinking its market. It is expanding the number of participants who need its physics engines, and every new participant funnels through the same chokepoint.

Why Open Source Can’t Win Here

The obvious counter-argument is that if AI writes design code, open-source models could eventually commoditize Cadence’s layer. This misunderstands what Cadence actually sells.

The hallucination risk in hardware is categorically different from software. If an LLM hallucinates a line of Python, you debug it. If a chip design tool hallucinates a transistor gate at two nanometers, you lose a hundred million dollars and a year of fabrication time. There is no “move fast and break things” in semiconductor manufacturing.

Cadence’s AI isn’t trained on Stack Overflow posts. It’s trained on physics-compliant data co-developed with TSMC, Samsung, Intel, and Rapidus over decades. The resulting tools are precision instruments calibrated to the exact chemical realities of each manufacturing process. A competitor cannot replicate this by writing better code. They would need a decade of shared proprietary foundry data to match the baseline. The moat isn’t the AI model. The moat is the integration of that model with the immutable laws of physics and the proprietary manufacturing rules of the world’s most advanced fabs.

The China export episode in May 2025 proved this accidentally. When tools were restricted for one month, Cadence didn’t cut guidance; it raised it. Global customers didn’t evaluate alternatives. They accelerated purchases and paid premiums to secure access. That’s not how people respond when optional software becomes unavailable. That’s how they respond when essential infrastructure goes down.

The Consensus is Reasonable, and Possibly Wrong

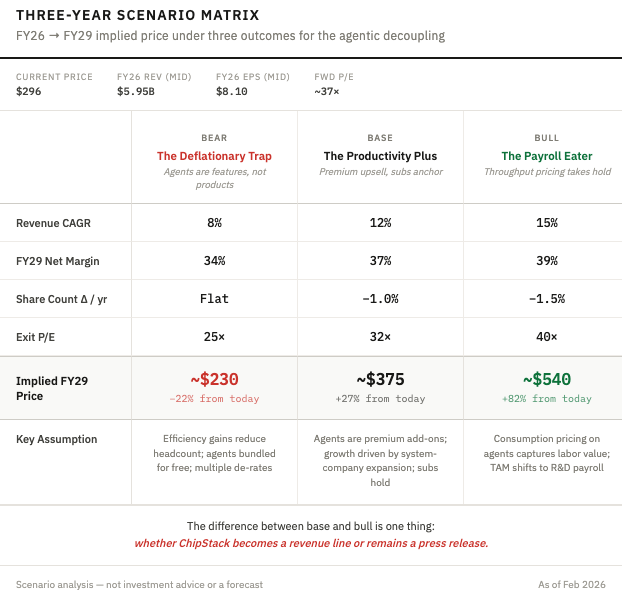

Here is the thing about the consensus view on Cadence after this print: it makes perfect sense. You have a duopoly business with a record $7.8 billion backlog covering two-thirds of next year’s revenue. You have low-teens guided growth. You have 45% non-GAAP operating margins. You have deepening foundry partnerships with every major advanced manufacturer. At 37 times forward earnings, you’re paying a full price for a high-quality compounder, but you’re getting genuine visibility and genuine defensibility. A reasonable investor would look at this and conclude: great business, fair multiple, hold and compound.

But there’s something odd about how Devgan described ChipStack’s pricing. The language wasn’t “feature upgrade” or “next-generation tool.” It was “virtual engineer.” “Force multiplier.” “10x productivity improvement.” This is labor language, not software language. And the customers endorsing it (NVIDIA, Qualcomm, Altera, Tenstorrent) aren’t evaluating a tool. They’re evaluating capacity.

If Cadence prices ChipStack like labor replacement rather than a software subscription, the TAM shifts from what customers spend on EDA licenses to what they spend on chip design payroll. The software budget is capped. The R&D payroll budget at hyperscalers designing custom AI silicon is enormous and growing. The consensus is modeling the old business: seats, contracts, renewals. The variant view is that Cadence is becoming something else entirely: a toll on the exponentially growing volume of design work that AI both demands and enables.

The risk isn’t that ChipStack fails. It’s that it succeeds too well and becomes so obviously necessary that procurement treats it like email, not like a negotiation. If agents get bundled into existing contracts at no incremental price, Cadence will have given away the productivity gains, and the stock remains a low-teens compounder at a full multiple. Pricing power is the entire ballgame.

What the Numbers Say (and Don’t Say)

The infrastructure thesis needs clean numbers to sustain a premium multiple. Three items deserve scrutiny.

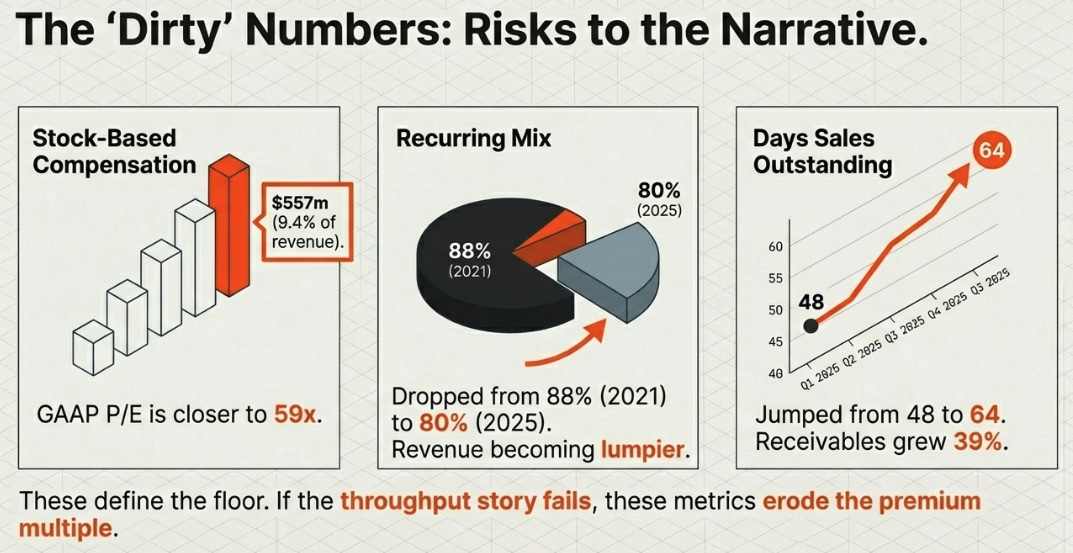

Stock-based compensation grew to $455 million in FY25, or 8.6% of revenue, and is guided to $557 million, or 9.4% of revenue, for FY26. On GAAP earnings, the P/E is roughly 59 times, not the 37 times quoted above. That gap is widening, not narrowing.
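The arithmetic behind those ratios can be sketched as follows. All inputs are the rounded figures quoted in the text; the "implied" FY26 revenue and growth are back-solved from them for illustration and are not company guidance. The P/E comparison assumes both multiples use the same share price and the same earnings period, which the text does not state explicitly.

```python
# Figures as quoted in the text, in USD millions (revenue rounded to $5.3B).
FY25_REVENUE = 5_300
FY25_SBC = 455
FY26_SBC_GUIDE = 557
FY26_SBC_PCT_GUIDE = 0.094  # 9.4% of revenue

# SBC as a share of FY25 revenue.
fy25_sbc_pct = FY25_SBC / FY25_REVENUE

# Back-solved, not guided: the revenue level at which $557M equals 9.4%.
implied_fy26_revenue = FY26_SBC_GUIDE / FY26_SBC_PCT_GUIDE
implied_fy26_growth = implied_fy26_revenue / FY25_REVENUE - 1

# For a single share price, P/E is inversely proportional to EPS, so
# GAAP EPS / non-GAAP EPS = non-GAAP P/E / GAAP P/E (same-period assumption).
gaap_share_of_non_gaap_eps = 37 / 59

print(f"FY25 SBC / revenue:        {fy25_sbc_pct:.1%}")
print(f"Implied FY26 revenue ($M): {implied_fy26_revenue:,.0f}")
print(f"Implied FY26 growth:       {implied_fy26_growth:.1%}")
print(f"GAAP EPS vs non-GAAP EPS:  {gaap_share_of_non_gaap_eps:.0%}")
```

Under these assumptions the implied growth lands in the low teens, consistent with the guided range mentioned earlier, and GAAP earnings come to a bit under two-thirds of the non-GAAP figure.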

Recurring revenue as a share of total has declined from 88% in 2021 to 80% in 2025. IP and hardware are growing faster than recurring software. They are good businesses, but lumpier. The market is paying for predictability at the exact moment the revenue mix is becoming less predictable.

Days sales outstanding jumped from 48 to 64 days. Receivables grew 39% against 14% revenue growth. Management guided to normalization. Perhaps it’s timing. But these are the kinds of trends that erode confidence if they persist.
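The mechanism is simple: DSO rises whenever receivables grow faster than revenue. A minimal sketch, assuming DSO is measured as receivables over full-year revenue (the text does not say which basis the 48 and 64 figures use; the function names are illustrative):

```python
def dso(receivables: float, revenue: float, days: int = 365) -> float:
    """Days sales outstanding: receivables expressed in days of revenue."""
    return receivables / revenue * days


def implied_dso(prior_dso: float, receivables_growth: float,
                revenue_growth: float) -> float:
    """Scale a prior DSO by the ratio of receivables growth to revenue growth."""
    return prior_dso * (1 + receivables_growth) / (1 + revenue_growth)


# Inputs quoted in the text: 48 days, receivables +39%, revenue +14%.
annual_basis_dso = implied_dso(48, 0.39, 0.14)
print(f"Implied DSO on an annual-revenue basis: {annual_basis_dso:.1f} days")
```

On this annual basis the quoted growth rates imply roughly 58 to 59 days rather than 64. The remainder presumably reflects a different measurement basis, such as year-end receivables against fourth-quarter revenue; that explanation is an assumption, not something the source confirms.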

None of these break the thesis. But they define the floor if the throughput-pricing story doesn’t materialize.

What to Watch

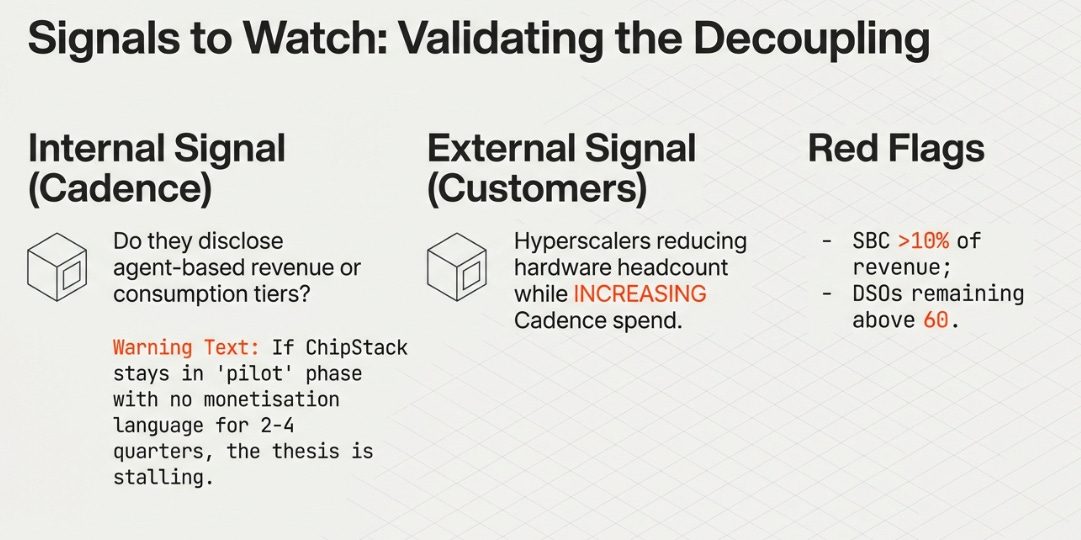

The thesis will be confirmed or killed by a small number of observable signals. The most important: does management start disclosing agent-based revenue or consumption-based pricing tiers? If ChipStack stays in the “endorsements and pilots” phase with no monetization language within two to four quarters, the decoupling isn’t happening.

The external signal matters too. Watch whether hyperscalers reduce hardware engineering headcount while increasing Cadence spend. That’s the decoupling made visible in someone else’s income statement. And watch the earnings quality: SBC above 10% of revenue impairs the margin thesis; DSOs above 60 again next quarter means the deterioration is structural; recurring mix below 78% reopens the cyclicality debate.
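The three earnings-quality thresholds above can be written down as a simple checklist. This is a sketch only: the class and function names are hypothetical, and the FY25 inputs are the figures quoted earlier in the text.

```python
from dataclasses import dataclass


@dataclass
class QualitySnapshot:
    sbc_pct_revenue: float    # stock-based compensation, % of revenue
    dso_days: float           # days sales outstanding
    recurring_mix_pct: float  # recurring revenue, % of total


def quality_flags(s: QualitySnapshot) -> list[str]:
    """Return the warning signals from the text that a snapshot trips."""
    flags = []
    if s.sbc_pct_revenue > 10.0:
        flags.append("SBC above 10% of revenue: margin thesis impaired")
    if s.dso_days > 60:
        flags.append("DSO above 60: deterioration may be structural")
    if s.recurring_mix_pct < 78.0:
        flags.append("Recurring mix below 78%: cyclicality debate reopens")
    return flags


# FY25 figures as quoted in the text: 8.6% SBC, 64-day DSO, 80% recurring.
fy25_flags = quality_flags(QualitySnapshot(8.6, 64, 80.0))
for flag in fy25_flags:
    print(flag)
```

On the FY25 figures only the DSO check trips, which is why a second consecutive reading above 60 is the signal to watch.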

The Bigger Question

What makes Cadence interesting beyond Cadence is that the agentic decoupling isn’t a question unique to EDA. Every seat-based vertical software company (Autodesk, Ansys, PTC, arguably even Salesforce) will face the same structural question as AI agents become capable of doing the work their tools were designed to assist with. Can you charge for the output rather than the access?

Cadence may be the first to answer this question at scale because EDA has something most vertical software lacks: a physics constraint that makes the underlying engine irreplaceable. You can’t hallucinate your way through a two-nanometer tape-out. That gives Cadence pricing power that a CRM or a CAD platform probably won’t have when their own agentic moment arrives.

At 37 times forward earnings, the market is paying for a great software compounder. If the agentic decoupling works, that’s cheap for an infrastructure monopoly pricing the design bottleneck. If it doesn’t, it’s a fair price for what Cadence has always been.

The bottleneck has moved to design throughput. The only question is whether Cadence will price the bottleneck, or just make it more comfortable to sit inside.

Disclaimer:

The content does not constitute any kind of investment or financial advice. Kindly reach out to your advisor for any investment-related advice.